The most comprehensive marketplace for data center solutions.

Datacentermart.com. All rights reserved

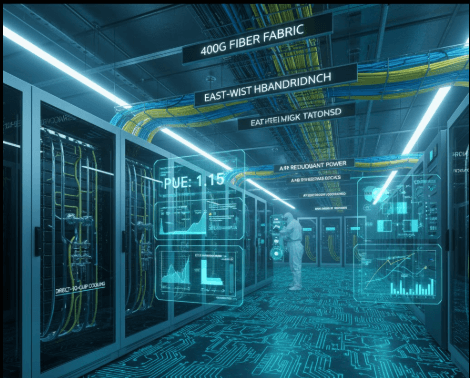

The rapid expansion of artificial intelligence has reshaped modern data centers. As organizations increasingly rely on GPUs to power machine learning, deep learning, and large language models, infrastructure demands have risen sharply. High-density layout design for GPU AI workloads has become a critical requirement rather than an optional enhancement. Traditional data center layouts were never built to support the power density, cooling intensity, and networking performance that GPU-driven AI workloads demand today.

High-density layout design focuses on maximizing performance while maintaining operational stability. It enables organizations to deploy more computing power in a smaller footprint without sacrificing reliability or efficiency. As AI adoption accelerates across industries such as healthcare, finance, autonomous systems, and cloud services, the importance of intelligent layout design continues to grow.

GPU AI workloads differ significantly from conventional enterprise applications. AI training and inference require massive parallel computation, continuous data movement, and high memory bandwidth. These requirements push hardware utilization to the extreme, leading to much higher rack densities than in CPU-based environments. High-density layout design addresses these challenges by aligning the physical infrastructure with computational demands.

Modern GPU data center layout design prioritizes performance consistency and thermal stability. Without proper layout planning, GPUs may throttle because of heat or power limits, reducing the effectiveness of AI systems. This makes layout design a foundational part of AI infrastructure design rather than an afterthought.

Power delivery is one of the most critical aspects of high-density layout design for GPU AI workloads. A rack of GPU servers draws significantly more power than a conventional rack, often exceeding the limits that traditional power designs assume. A poorly planned power layout can lead to overloads, inefficiencies, and downtime.

Effective AI infrastructure design ensures that power distribution systems are scalable and redundant. Transformers, UPS systems, and PDUs must be positioned strategically to support increasing GPU density. A good high-density layout keeps power paths balanced and able to handle peak loads without failure. This approach not only improves reliability but also simplifies future expansion.
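The peak-load and redundancy reasoning above can be sketched as a simple rack power budget check. This is a minimal illustration, not a sizing method: the class name `RackPowerPlan`, the 80% derating factor, and all wattage figures are assumptions chosen for the example, and real designs should follow the applicable electrical codes and vendor ratings.

```python
from dataclasses import dataclass


@dataclass
class RackPowerPlan:
    """Hypothetical rack power budget; every figure here is an assumption."""
    servers: int               # GPU servers in the rack
    peak_kw_per_server: float  # assumed peak draw per server, in kW
    feed_capacity_kw: float    # rated capacity of ONE power feed, in kW
    feeds: int = 2             # number of independent feeds (for example A/B)
    derating: float = 0.8      # assumed continuous-load limit per feed

    @property
    def peak_load_kw(self) -> float:
        # Worst case: every server at peak draw simultaneously.
        return self.servers * self.peak_kw_per_server

    @property
    def usable_kw_per_feed(self) -> float:
        return self.feed_capacity_kw * self.derating

    def fits_normally(self) -> bool:
        """All feeds healthy: the load is shared across every feed."""
        return self.peak_load_kw <= self.usable_kw_per_feed * self.feeds

    def survives_feed_loss(self) -> bool:
        """One feed lost: the remaining feeds must carry the full peak load."""
        return self.peak_load_kw <= self.usable_kw_per_feed * (self.feeds - 1)


if __name__ == "__main__":
    for count in (4, 6):
        plan = RackPowerPlan(servers=count, peak_kw_per_server=10.0,
                             feed_capacity_kw=60.0)
        print(count, plan.peak_load_kw, plan.fits_normally(),
              plan.survives_feed_loss())
```

With these illustrative numbers, the six-server rack fits while both feeds are healthy but would overload the surviving feed if one were lost, which is the kind of gap a redundant power layout is meant to rule out.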

Cooling is a defining factor in the success of GPU-intensive data centers. High-performance GPUs generate substantial heat, and traditional cooling methods struggle to keep up at higher densities. High-density layout design integrates advanced data center thermal management strategies to maintain safe operating temperatures.

Proper airflow planning, containment strategies, and liquid cooling integration are often necessary to support dense GPU deployments. GPU cluster design must align server placement with cooling zones to prevent hotspots. By embedding thermal efficiency into the layout itself, organizations can reduce energy consumption and extend hardware lifespan.
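One way to picture the alignment of server placement with cooling zones is to total the heat load assigned to each zone and compare it with that zone's cooling capacity. The sketch below assumes a deliberately simple model: rack heat in kW equals rack power, and each zone has a single capacity figure. The zone names, rack data, and function names are hypothetical.

```python
from collections import defaultdict


def zone_heat_loads(racks):
    """Sum the heat load (kW) of all racks assigned to each cooling zone."""
    loads = defaultdict(float)
    for rack in racks:
        loads[rack["zone"]] += rack["heat_kw"]
    return dict(loads)


def find_hotspots(racks, zone_capacity_kw):
    """Return the zones whose total heat load exceeds their cooling capacity.

    A zone with no listed capacity is treated as having none, so any rack
    placed there is flagged.
    """
    loads = zone_heat_loads(racks)
    return sorted(
        zone for zone, load in loads.items()
        if load > zone_capacity_kw.get(zone, 0.0)
    )


if __name__ == "__main__":
    # Illustrative placement: two racks in zone A, three in zone B.
    racks = [
        {"name": "r1", "zone": "A", "heat_kw": 40.0},
        {"name": "r2", "zone": "A", "heat_kw": 40.0},
        {"name": "r3", "zone": "B", "heat_kw": 40.0},
        {"name": "r4", "zone": "B", "heat_kw": 40.0},
        {"name": "r5", "zone": "B", "heat_kw": 40.0},
    ]
    capacity = {"A": 100.0, "B": 100.0}  # assumed cooling capacity per zone
    print(find_hotspots(racks, capacity))
```

A check like this only catches zone-level overload. Local hotspots inside a zone depend on airflow details that this model does not capture.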

Liquid cooling is rapidly becoming a standard component of high-density layouts for GPU AI workloads. As air cooling reaches its limits, liquid-based solutions offer higher heat removal efficiency and better space utilization. Integrating liquid cooling into GPU data center layout design requires careful coordination between racks, piping infrastructure, and maintenance access.

When implemented correctly, liquid cooling significantly improves data center thermal management while enabling higher rack densities. This makes it a key enabler for next-generation AI infrastructure design.
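The efficiency gap between air and liquid follows from the steady-state heat balance, where the required volumetric flow is the heat load divided by the product of density, specific heat, and temperature rise. The sketch below applies that relation with approximate room-temperature property values for air and water. The 40 kW load and 10 K temperature rise are assumptions for illustration, and real systems rarely run air and liquid loops at the same temperature rise.

```python
# Approximate fluid properties near room temperature (assumed constants).
AIR = {"density_kg_m3": 1.2, "cp_j_kg_k": 1005.0}
WATER = {"density_kg_m3": 997.0, "cp_j_kg_k": 4186.0}


def required_flow_m3_s(heat_w, delta_t_k, fluid):
    """Volumetric flow needed to carry heat_w watts at a delta_t_k rise.

    Uses the steady-state balance Q = P / (rho * cp * dT).
    """
    if heat_w < 0 or delta_t_k <= 0:
        raise ValueError("heat must be non-negative and delta T positive")
    return heat_w / (fluid["density_kg_m3"] * fluid["cp_j_kg_k"] * delta_t_k)


if __name__ == "__main__":
    heat_w = 40_000.0   # assumed rack heat load
    delta_t_k = 10.0    # assumed coolant temperature rise
    air = required_flow_m3_s(heat_w, delta_t_k, AIR)
    water = required_flow_m3_s(heat_w, delta_t_k, WATER)
    print(f"air:   {air:.2f} m^3/s")
    print(f"water: {water * 60_000:.1f} L/min")
    print(f"air needs about {air / water:.0f}x the volume of water")
```

Under these assumptions water moves the same heat with a few thousand times less volume than air, which is why piping can replace large air paths in dense racks.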

Efficient use of physical space is essential in high-density environments. High-density layout design focuses on optimizing rack placement, floor loading, and service clearances. GPU servers are heavier and more densely packed than conventional servers, so they often require reinforced flooring and precise spacing.

A well-planned GPU cluster design reduces cable congestion, improves airflow, and simplifies maintenance. By aligning rack orientation with cooling and power distribution, organizations can deploy more GPUs without expanding their data center footprint. This level of spatial efficiency is crucial for scaling AI operations sustainably.
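A first-pass floor loading check divides the loaded rack mass by its footprint and compares the result with the floor's rated uniform load. The sketch below is only that first pass: the rack mass, footprint, and floor rating are hypothetical, and it ignores point loads at the feet or casters, which a structural engineer would need to assess.

```python
def floor_load_kg_per_m2(rack_mass_kg, width_m, depth_m):
    """Average load a rack places on its own footprint, in kg per square metre."""
    if width_m <= 0 or depth_m <= 0:
        raise ValueError("footprint dimensions must be positive")
    return rack_mass_kg / (width_m * depth_m)


def within_floor_rating(rack_mass_kg, width_m, depth_m, floor_rating_kg_per_m2):
    """True if the rack's average footprint load does not exceed the rating."""
    load = floor_load_kg_per_m2(rack_mass_kg, width_m, depth_m)
    return load <= floor_rating_kg_per_m2


if __name__ == "__main__":
    # Assumed example: a 1,500 kg loaded rack on a 0.6 m x 1.2 m footprint,
    # checked against an assumed floor rating of 1,500 kg per square metre.
    load = floor_load_kg_per_m2(1500.0, 0.6, 1.2)
    print(f"{load:.0f} kg/m^2, ok={within_floor_rating(1500.0, 0.6, 1.2, 1500.0)}")
```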

AI workloads rely on rapid data exchange between GPUs, so network latency and bandwidth limits can severely impact performance. High-density layout design positions network components physically to support ultra-low-latency communication.

High-speed interconnects such as InfiniBand and advanced Ethernet fabrics are central to GPU data center layout design. Placing network switches closer to GPU racks minimizes cable length and signal degradation. This integrated approach to GPU cluster design supports faster training times and more efficient inference operations.
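The effect of cable length on latency can be estimated from the propagation speed of light in optical fiber. The sketch below assumes a typical group index of about 1.47 for the fiber, which gives roughly 5 ns per metre. The cable lengths are illustrative, and propagation delay is only one component of end-to-end latency alongside switching and serialization.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458  # exact, by definition of the metre
FIBER_GROUP_INDEX = 1.47              # assumed typical value for optical fiber


def propagation_delay_ns(cable_length_m, group_index=FIBER_GROUP_INDEX):
    """One-way propagation delay through a cable, in nanoseconds."""
    if cable_length_m < 0:
        raise ValueError("cable length must be non-negative")
    return cable_length_m * group_index / SPEED_OF_LIGHT_M_PER_S * 1e9


if __name__ == "__main__":
    # Compare a switch in the same row with one across the hall (assumed runs).
    for length_m in (3.0, 30.0, 100.0):
        print(f"{length_m:6.1f} m -> {propagation_delay_ns(length_m):7.1f} ns one way")
```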

Scalability is one of the defining characteristics of effective high-density layout design for GPU AI workloads. AI technology evolves rapidly, and infrastructure must adapt just as quickly. Layouts that are rigid or over-optimized for current hardware can become obsolete within a few years.

Forward-looking AI infrastructure design incorporates modular layouts and expansion-ready power and cooling systems. This allows organizations to integrate newer GPU generations without major redesigns. A layout that prioritizes flexibility delivers long-term value and reduces operational disruption.

Energy consumption is a growing concern in GPU-heavy data centers. Without optimization, AI workloads can drive operational costs and environmental impact to unsustainable levels. High-density layout design improves efficiency by minimizing energy losses and optimizing cooling performance.

Advanced data center thermal management techniques reduce the need for excessive cooling, while efficient power layouts lower overall energy waste. Sustainable GPU data center layout design not only supports corporate environmental goals but also improves long-term cost efficiency.
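A common way to quantify this kind of efficiency is power usage effectiveness (PUE), the ratio of total facility energy to the energy delivered to IT equipment. The article does not name a metric, so PUE is brought in here as an illustration, and the energy figures are made up for the example.

```python
def pue(it_energy_kwh, cooling_energy_kwh, other_overhead_kwh=0.0):
    """Power usage effectiveness: total facility energy / IT equipment energy.

    A value of 1.0 would mean every kWh reaches IT equipment. Cooling and
    power distribution losses push the value higher.
    """
    if it_energy_kwh <= 0:
        raise ValueError("IT energy must be positive")
    total = it_energy_kwh + cooling_energy_kwh + other_overhead_kwh
    return total / it_energy_kwh


if __name__ == "__main__":
    # Assumed figures for one period: IT load, cooling, and distribution losses.
    before = pue(it_energy_kwh=1000.0, cooling_energy_kwh=500.0, other_overhead_kwh=100.0)
    after = pue(it_energy_kwh=1000.0, cooling_energy_kwh=300.0, other_overhead_kwh=100.0)
    print(f"before: {before:.2f}, after: {after:.2f}")
```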

AI systems often support critical business operations, making reliability non-negotiable. High-density layout design enhances uptime by incorporating redundancy at every level. Power feeds, cooling paths, and network routes are designed to tolerate failures without disrupting operations.

Segmented layout zones and fault-isolated GPU cluster design reduce the impact of localized issues. This approach keeps AI workloads running during maintenance or unexpected failures, reinforcing trust in AI-driven systems.
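The idea of fault-isolated zones can be checked with a simple single-failure test: remove each zone in turn and confirm the remaining zones still provide the capacity the workload needs. The sketch below assumes capacity is counted in GPU nodes per zone. The zone names, node counts, and function names are hypothetical.

```python
def surviving_nodes(zones, failed_zone):
    """GPU nodes still available when one zone is offline."""
    return sum(nodes for zone, nodes in zones.items() if zone != failed_zone)


def tolerates_any_single_zone_failure(zones, required_nodes):
    """True if losing any one zone still leaves at least required_nodes."""
    return all(
        surviving_nodes(zones, zone) >= required_nodes
        for zone in zones
    )


if __name__ == "__main__":
    zones = {"zone-a": 16, "zone-b": 16, "zone-c": 16}  # assumed layout
    print(tolerates_any_single_zone_failure(zones, required_nodes=32))
    print(tolerates_any_single_zone_failure(zones, required_nodes=40))
```

The same pattern extends to maintenance windows: treating a zone under maintenance as failed shows whether the work can proceed without dropping below the required capacity.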

As AI models grow in complexity and scale, demand for GPU density will continue to rise. Innovations in cooling, power delivery, and automation will further influence high-density layout design for GPU AI workloads. AI-driven monitoring systems and predictive maintenance will become integral parts of future AI infrastructure design.

Organizations that invest early in intelligent GPU data center layout design will be better positioned to adopt emerging technologies. High-density layout design will remain a cornerstone of competitive, high-performance AI environments.

What is high-density computing layout design for GPU AI workloads?

High-density computing layout design for GPU AI workloads is the planning of data center space, power, cooling, and networking to efficiently support GPU-based artificial intelligence systems.

Why is high-density computing important for GPU AI workloads?

GPU AI workloads require high power and cooling capacity. High-density layouts ensure optimal performance, stability, and efficient resource utilization.

Do high-density computing layouts help reduce operational costs?

Yes. Optimized power distribution, efficient cooling, and improved space utilization help reduce energy consumption and overall operational costs.

Are high-density computing layouts suitable for all data centers?

High-density computing layouts are ideal for data centers running GPU-intensive AI workloads and can be adapted for both new and existing facilities.

Is high-density computing layout design future-ready for AI growth?

Yes. These layouts are designed to scale with future GPU upgrades, higher power densities, and evolving AI infrastructure requirements.